Learn How To Optimize Your Robots.txt File In 5 Minutes

Last updated: July 11th, 2014

So you want to become a robots.txt rockstar eh? Well, before you can make those web spiders dance to your rhythm, there are a few basic principles that you should be familiar with. Create your robots.txt file incorrectly and you will be in a world of hurt. Do it properly and the search engines will love you.

What Is A Robots.txt File?

It is an “instruction manual” that web crawlers (Google, Bing, etc.) use when visiting your website.

The robots.txt file tells the various search engine bots/crawlers/spiders where they can and cannot go on your website. You are telling these bots (Google, Bing, etc.) what they are allowed to “see” on your website and what is off limits.

Your robots.txt file is the police officer at a traffic stop and the cars are the web crawlers/spiders.

Make sense? Good.

Why Do I Need A Robots.txt File?

An often neglected part of SEO, the robots.txt file is something that people tend to whip together in a hasty fashion. Maybe it’s because it tends to be one of the last items in a website launch list (shouldn’t be…. but you know….) or maybe people in general are just lazy. Are you a lazy webmaster? I hope not…

What Could Go Wrong If I Don’t Use This Robots.txt File?

Without a robots.txt file, your website is:

- Not optimized in terms of crawlability

- More prone to SEO errors

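- More likely to have private or low-value sections crawled and shown in search results

- Missing a basic layer of guidance for well-behaved bots (keep in mind that robots.txt is not a security control and will not stop ill-willed users)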

- Going to suffer behind the competition

- Going to have indexation problems

- Going to be a mess to sort out in webmaster tools

- Going to give off confusing signals to the search engines

- & more…

Let’s Begin: Creating Your Robots.txt File

Minute #1: Do You Already Have A Robots.txt File?

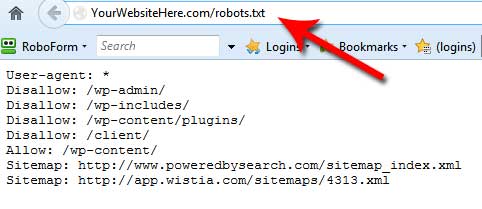

It would be a good idea to determine if your website currently has a robots.txt file to begin with. You may not want to override anything that is currently there. If you do not know if your website has a robots file, simply visit your domain name followed by “/robots.txt”. An example of how this would look is:

www.mywebsite.com/robots.txt

Replace the “mywebsite” portion with your own domain name of course.

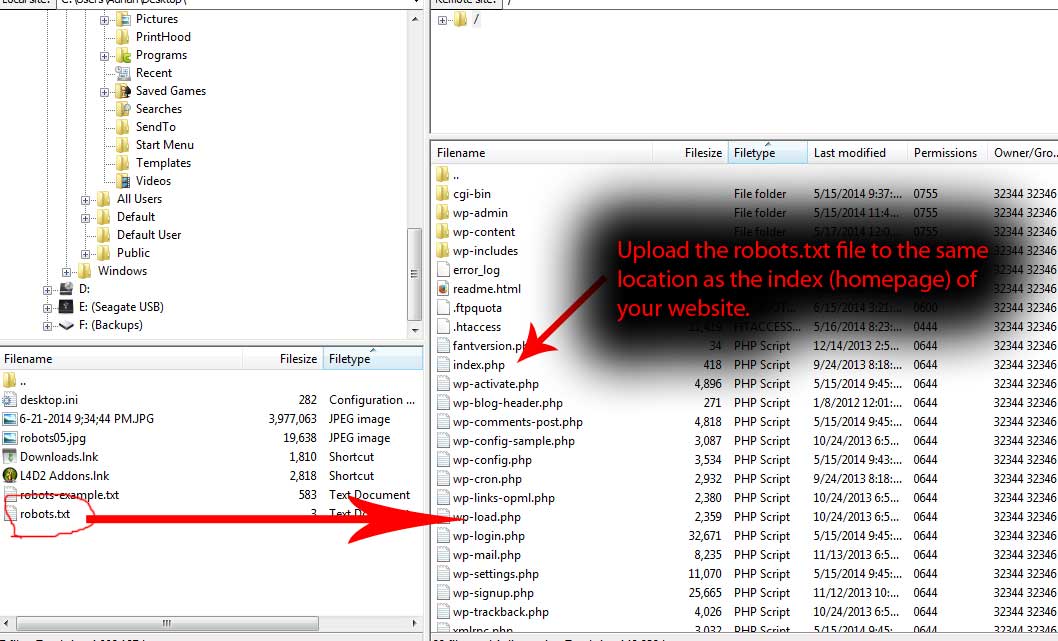

*Note: The location for the robots.txt file must always be in the “root” or “home” level of your website, meaning it should be in the same folder as your homepage or index page.
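The root-level rule in the note above can be sketched in a few lines of Python. This is only an illustration: `robots_url` is a hypothetical helper name, and the sample address reuses the imaginary mywebsite.com domain from this tutorial.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_url(page_url: str) -> str:
    """Return the robots.txt URL for the site that serves page_url."""
    parts = urlsplit(page_url)
    # Keep only the scheme and host: robots.txt always sits at the root path.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


# Hypothetical page on the tutorial's imaginary website.
print(robots_url("http://www.mywebsite.com/blog/some-post/"))
# prints http://www.mywebsite.com/robots.txt
```

Whatever page you start from, the folder path, query string, and fragment are dropped, which mirrors the “root” requirement.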

If you see nothing when you visit that URL, your website does not have a robots.txt file. If you DO see information however, you DO have a current robots file. In this case, when you go to edit or add any rules (shown below), be sure not to delete anything you currently have as it may “mess up” your website.

To be safe, make a backup copy of your robots.txt file before you begin to edit it. When it comes to working with digital files, I have three words for you: ALWAYS MAKE BACKUPS
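If you prefer to script the backup, a minimal sketch could look like the one below. It assumes you have a local copy of the file; `backup_file` and the “.bak” suffix are illustrative choices, not a convention required by anything.

```python
import shutil
from pathlib import Path


def backup_file(path: str) -> Path:
    """Copy `path` to a sibling file with '.bak' appended and return the copy's path."""
    source = Path(path)
    backup = source.with_name(source.name + ".bak")
    # copy2 copies the contents and tries to preserve timestamps as well.
    # Note: an existing backup with the same name is overwritten.
    shutil.copy2(source, backup)
    return backup
```

Calling `backup_file("robots.txt")` in the folder that holds your local copy would produce `robots.txt.bak` next to it.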

Minute #2: Starting Your Robots.txt File

Creating a robots.txt file is as easy as getting out of bed. Okay, well for me getting out of bed is difficult, but I digress.

To create a robots.txt file, simply open up any kind of text editor. It is important you do not use WYSIWYG software (web page design software), as these tools may add in extra code which we do not want. Keep it simple and use a text editor. Common ones include:

- Notepad

- Notepad++

- Brackets

- TextWrangler

- TextMate

- Sublime Text

- Vim

- Atom

- etc.

Any of these programs will do and since your PC comes with Notepad by default, you might as well use that for this tutorial.

With Notepad open, begin to enter in your “rules”. Once you have entered your rules, you save the file by calling it “robots” and you make sure it is saved with the extension of “Text Documents (*.txt)”.

What kind of “rules” should you enter into your robots.txt file? It depends on what you want to accomplish. Before you enter your rules, you need to decide what you want to “block” or “hide” from being crawled on your website. Folders on your website that have no need to be crawled and indexed in the search engine results include things such as:

- Site-search pages

- Checkout/Ecommerce sections

- User log-in areas

- Sensitive data

- Testing/Staging/Duplicate data

- etc.

With this information on hand, setting up your rules is easy. Let’s take a look at exactly how we do this.

Understanding The Rules of a Robots.txt File

When it comes to the robots.txt file, there is a standard format for creating your rules.

1) Asterisks are used as a wildcard: *

2) To allow areas of your website to be crawled, the “Allow” rule is used

3) To block areas of your website from being crawled, the “Disallow” rule is used

Let’s say that you owned a website (which you probably do??). On your website, (let’s call it mywebsite.com) you had a sub-folder which contained duplicate information/testing material/stuff you want to keep private. Maybe you had this sub-folder setup as a staging or testing area. Let’s call this folder “staging”. Your robots.txt file would look something like this:

User-agent: *

Disallow: /staging/

Pretty simple isn’t it? Let’s take a look at what’s going on here.

We start off our robots.txt with User-agent: *

The User-agent definition addresses the search engine spiders and the asterisk is used as a wildcard. This rule therefore is instructing ALL spiders from ALL search engines that they need to follow ALL rules that come afterwards.

This remains the case until another User-agent line appears further down in the robots.txt (if you had to use it again). What comes afterwards?

The very next rule is:

Disallow: /staging/

This disallow rule is telling the search engine spiders that they are not allowed to crawl anything on your website which resides in the “staging” folder. Using the name of our imaginary website, this location would look like so: www.mywebsite.com/staging/
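One way to sanity-check a rule like this before uploading it is Python's standard-library `urllib.robotparser`. The sketch below feeds it the two-line file from above; the page names `draft.html` and `about.html` are made up for illustration. Note that the rules are written without blank lines between them, because this parser treats a blank line as the end of a group.

```python
from urllib.robotparser import RobotFileParser

# The two-line robots.txt from this section.
rules = """\
User-agent: *
Disallow: /staging/
"""

parser = RobotFileParser()
parser.parse(rules.splitlines())

# Hypothetical URLs on the tutorial's imaginary website.
print(parser.can_fetch("*", "http://www.mywebsite.com/staging/draft.html"))  # False
print(parser.can_fetch("*", "http://www.mywebsite.com/about.html"))          # True
```

Anything under “staging” is refused, while the rest of the site stays crawlable because no rule matches it.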

*Tip: Keep in mind that just because you disallow a certain section of your website from being crawled, it may still show up in the index of search engines IF it was previously crawled AND if you allowed those pages to be indexed.

To be certain that this doesn’t happen, add “noindex” meta tags to those webpages (more on that further down the page). Keep in mind that a crawler can only read a meta tag on a page it is allowed to fetch, so let the search engines see the “noindex” tag first and add the disallow rule once the pages have dropped out of the index. If the pages you do not want crawled are already displayed within the index of a search engine, you may have to remove them manually through the webmaster tools area of the respective search engines (Google/Bing).

Simple, wasn’t it?

A Few Examples That You May Use

What you see below are a few robots.txt examples showing different rules for different situations. Not everyone’s robots.txt file is going to be the same. Some people may want to block their entire website from being crawled. Others may only have a need to restrict certain sections of their website.

Allowing Everything On The Website To Be Crawled & Indexed

User-agent: *

Allow: /

Blocking The Entire Website From Being Crawled

User-agent: *

Disallow: /

Blocking A Specific Folder From Being Crawled

User-agent: *

Disallow: /myfolder/

Allow A Specific Spider/Robot (in this case Google) To Access Your Site & Deny All Others

User-agent: Googlebot

Allow: /

User-agent: *

Disallow: /
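The Googlebot-only example can be checked the same way with `urllib.robotparser`. This is a sketch rather than a guarantee of how any particular search engine behaves; “Bingbot” simply stands in for “any other crawler”, and the `/products/` URL is hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Two groups: one for Googlebot, one catch-all for everyone else.
rules = """\
User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /
"""

parser = RobotFileParser()
parser.parse(rules.splitlines())

for agent in ("Googlebot", "Bingbot"):
    # Googlebot matches its own group; Bingbot falls through to the "*" group.
    print(agent, parser.can_fetch(agent, "http://www.mywebsite.com/products/"))
# prints "Googlebot True" then "Bingbot False"
```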

Extra: A WordPress Specific Robots.txt File

When it comes to WordPress, there are three main standard directories in every WP install. They are:

- wp-content

- wp-admin

- wp-includes

The wp-content folder contains a sub-folder known as “uploads”. This location contains media files which you use (images, etc.) and we should NOT block this section. Why should you care about this?

Well, when you “disallow” a folder in robots.txt, all sub-folders underneath it are also blocked by default. So, we have to make a unique rule for this media sub-folder.

Your WordPress robots.txt file might now look something like this:

User-agent: *

Disallow: /wp-admin/

Disallow: /wp-includes/

Disallow: /wp-content/plugins/

Disallow: /wp-content/themes/

Allow: /wp-content/uploads/

We now have a very basic robots.txt file which blocks the folders of our choice, but still allows the sub-folder “uploads” to be crawled within “wp-content”. Make sense?

If you disallow folder “xyz”, every folder which is under/placed inside “xyz” will also be disallowed. To specify individual folders that should be crawled, an explicit rule must be given.
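To see the sub-folder behaviour in action, the basic WordPress file above can be run through `urllib.robotparser`. Treat this as a rough check only: Python's parser applies rules in file order, which may differ from how a given search engine resolves overlapping rules, and the file names used here are invented.

```python
from urllib.robotparser import RobotFileParser

# The basic WordPress rules from this section.
rules = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /wp-includes/
Disallow: /wp-content/plugins/
Disallow: /wp-content/themes/
Allow: /wp-content/uploads/
"""

parser = RobotFileParser()
parser.parse(rules.splitlines())

site = "http://www.mywebsite.com"
# A nested folder inside a disallowed folder is blocked as well.
print(parser.can_fetch("*", site + "/wp-admin/js/widgets/menu.js"))           # False
# The uploads folder, including its own sub-folders, stays crawlable.
print(parser.can_fetch("*", site + "/wp-content/uploads/2014/07/photo.png"))  # True
```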

Here is what you can use to start your WordPress robots.txt file. The oddball in this group which you may not have seen before is “Disallow: /20*”. This blocks the date archives, whose URLs start with the year (for example /2014/).

User-agent: *

Disallow: /wp-admin/

Disallow: /wp-includes/

Disallow: /wp-content/plugins/

Disallow: /wp-content/cache/

Disallow: /wp-content/themes/

Disallow: /xmlrpc.php

Disallow: /wp-

Disallow: /feed/

Disallow: /trackback/

Disallow: */feed/

Disallow: */trackback/

Disallow: /*?

Disallow: /cgi-bin/

Disallow: /wp-login/

Disallow: /wp-register/

Disallow: /20*

Allow: /wp-content/uploads/

Sitemap: http://example.com/sitemap.xml

*Tip: It is always a good idea to include a link to your sitemap from within the robots.txt file. Your sitemap should also be placed at the “root” of your website (same folder as your homepage file).
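In the starter file above, both “Disallow: /wp-” and “Allow: /wp-content/uploads/” match any URL inside the uploads folder. The Robots Exclusion Protocol standard (RFC 9309) resolves this by letting the most specific (longest) matching rule win, with Allow winning a tie. The sketch below illustrates that idea with plain prefix matching only. It deliberately ignores the `*` and `$` wildcards, `is_allowed` is a hypothetical helper, and individual crawlers may behave differently.

```python
def is_allowed(path, rules):
    """Return True if `path` may be crawled under `rules`.

    `rules` is a list of (allow, prefix) pairs for one user-agent group.
    Simplified: prefixes are matched literally, wildcards are not handled.
    """
    best_length = -1   # length of the longest matching prefix so far
    best_allow = True  # no matching rule means the path is allowed
    for allow, prefix in rules:
        if not path.startswith(prefix):
            continue
        longer = len(prefix) > best_length
        tie_won_by_allow = len(prefix) == best_length and allow
        if longer or tie_won_by_allow:
            best_length, best_allow = len(prefix), allow
    return best_allow


# Two of the overlapping rules from the starter file.
wordpress_rules = [
    (False, "/wp-"),
    (True, "/wp-content/uploads/"),
]

print(is_allowed("/wp-content/uploads/2014/07/photo.png", wordpress_rules))  # True
print(is_allowed("/wp-login.php", wordpress_rules))                          # False
print(is_allowed("/about/", wordpress_rules))                                # True
```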

Minute #3: A Cautious Warning / Checking For Errors

You will want to make certain that you did not make any typos. A simple typo could mess up your file, so be sure that you spelled everything correctly and have the proper spacing.
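A quick script can catch the most common typos, such as a misspelled rule name or a missing colon. The sketch below is a rough check, not a full validator: `find_suspect_lines` is a hypothetical helper, and the set of accepted rule names is an assumption (it includes the widely seen but non-standard “Crawl-delay”).

```python
# Rule names this sketch accepts (an assumption, adjust to your needs).
KNOWN_FIELDS = {"user-agent", "allow", "disallow", "sitemap", "crawl-delay"}


def find_suspect_lines(text: str) -> list[tuple[int, str]]:
    """Return (line number, line) pairs that do not look like valid rules."""
    suspects = []
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue  # blank lines and comment-only lines are fine
        field, separator, _value = line.partition(":")
        if not separator or field.strip().lower() not in KNOWN_FIELDS:
            suspects.append((number, raw))
    return suspects


# Sample with one misspelled rule name and one missing colon.
sample = "User-agent: *\nDissallow: /staging/\nDisallow /private/\nAllow: /\n"
print(find_suspect_lines(sample))
# prints [(2, 'Dissallow: /staging/'), (3, 'Disallow /private/')]
```

It only checks the shape of each line, so a wrong folder name or two rules run together on one line will still slip through.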

It is also important to note that not all spiders/crawlers follow the standard robots.txt protocol. Malicious users and spammy bots will view your robots.txt file and look for sensitive information such as private sections, sensitive data folders, admin areas, etc. While many people DO list these folders/areas of their website within the robots.txt file (and it’s okay 99% of the time), you can go a step further by leaving them out of your robots.txt file and hiding them from search results with a meta robots tag instead.

So, if you want to be extra safe and block your sensitive pages using meta tags, this is how you do it:

- Open up your website editing program (whatever you use to design/edit the actual website)

- Using the “code view” or “text view” of your editing software, you will enter the following code between the “head” tags of the page (top part of the page).

<meta name="robots" content="noindex, nofollow">

When viewing the source code of your page, it should look something like this now:

<html>

<head>

<title>…</title>

<meta name="robots" content="noindex, nofollow">

</head>

… and that is how you easily keep your pages out of the index and tell crawlers not to follow the links on them!
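To confirm that a page really carries the tag, you could scan its HTML with Python's built-in `html.parser`. The names `RobotsMetaFinder` and `robots_directives` are hypothetical, and the sample page is a stand-in for your own source code.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collect the directives of every <meta name="robots"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() == "robots":
            content = attributes.get("content") or ""
            # Split "noindex, nofollow" into individual lowercase directives.
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def robots_directives(html: str) -> set[str]:
    """Return the set of meta robots directives found in `html`."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return finder.directives


# Stand-in page; paste your own page source here instead.
page = """<html>
<head>
<title>Example</title>
<meta name="robots" content="noindex, nofollow">
</head>
</html>"""

print(robots_directives(page) == {"noindex", "nofollow"})  # True
```

An empty result means the tag is missing or misspelled. Curly quotes pasted from a word processor in place of straight quotes are a common cause.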

Minute #4: Uploading To Your Website

Of course, none of this will serve any purpose if you do not upload and save the file back to your website. So be sure to save your robots file as robots.txt, and if you chose to use the meta-tag option as well, be sure to re-upload those webpages after you have edited and saved them.

Minute #5: Viewing The File Online

The last step in this simple process is to view the robots.txt file in your web browser and to also make sure that your newly saved pages (if you used the meta-tag) are also updated. To be on the safe side, refresh your page or clear the cache & cookies on your computer before you do this.

A Helpful Resource:

For a nice list of web-crawler user-agent names, please visit: http://www.robotstxt.org/db.html. Here you can find pretty much any crawler “name” and add it to your allowed/disallowed list… most folks won’t need this though.

Conclusion & Closing Thoughts

So now you have the power and knowledge to effectively create and optimize your robots.txt file for your website – awesome! There is so much more to learn though. The robots.txt file is only one of hundreds of items that we use on a daily basis for our clients to make sure that we stay ahead of the curve.

If you want us to take a look at your website’s optimization and skyrocket your website’s ranking, be sure and get in touch for a free 25-minute marketing assessment. We’re always happy to help!

Any questions? Be sure and leave your comment below and I’ll get back to you as fast as lightning.
